- Volt Active Data demonstrated strong performance on Supermicro edge hardware in benchmark tests.

- Single-node tests achieved 348,000 TPS with 5ms latency using a Supermicro E302-12D-8C.

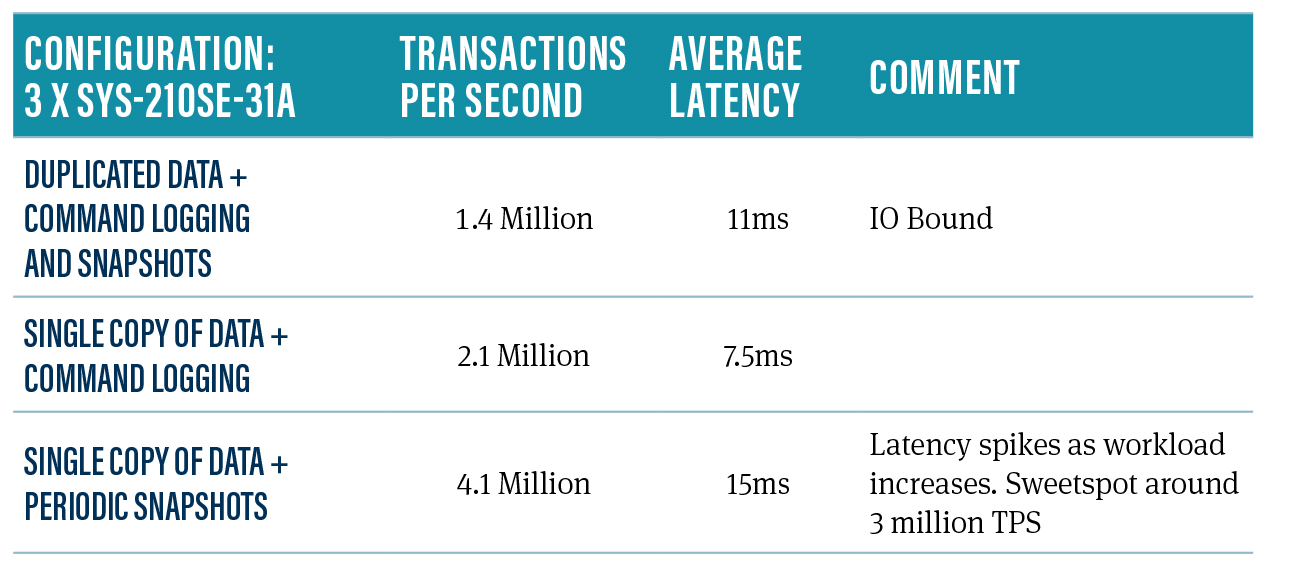

- A three-node cluster using Supermicro SYS-210SE-31A reached 1.4 million TPS at 11ms latency, with high availability and command logging enabled.

- Maximum throughput of 4.1 million TPS was achieved with zero spare copies, no command logging, and no snapshots on the three-node cluster.

- Volt combines data processing, storage, and streaming into a single platform optimized for efficient CPU core usage, making it suitable for edge processing with hardware constraints.

TL;DR

We’re excited to announce very strong results from benchmarking Volt Active Data on Supermicro edge hardware.

Supermicro is a global technology leader committed to delivering first-to-market innovation for Enterprise, Cloud, AI, Metaverse, and 5G Telco/Edge IT Infrastructure. They are a rack-scale total IT solutions provider that designs and builds an environmentally-friendly and energy-saving portfolio of servers, storage systems, switches, and software, along with offering global support services.

We tested our reference “Voter” application against the following hardware:

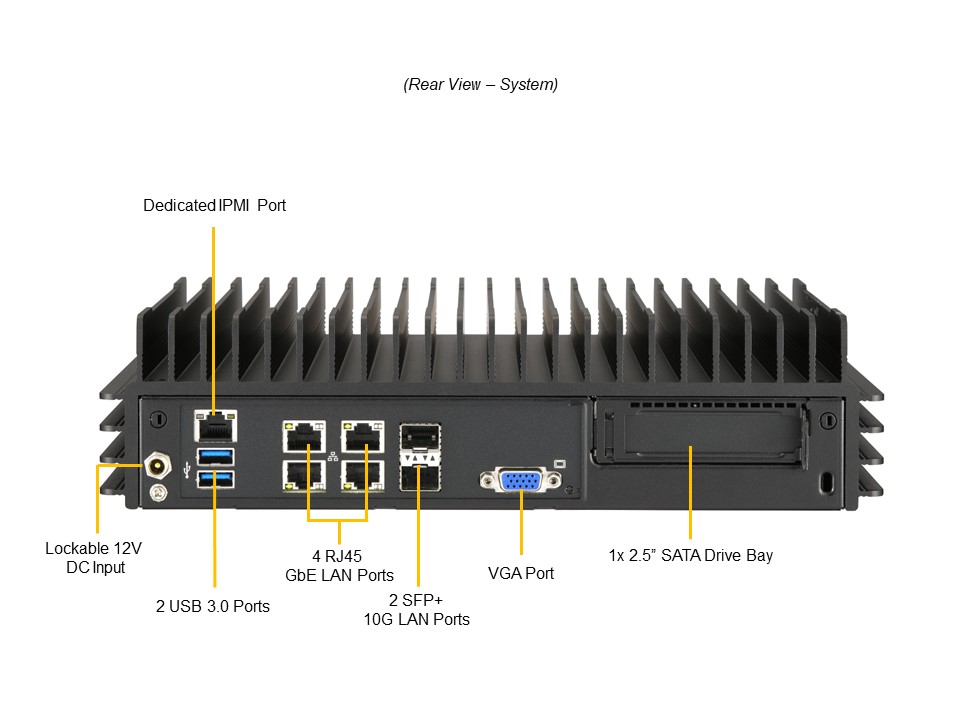

A single-node deployment on a Supermicro E302-12D-8C, a hardened system intended for industrial and IoT environments.

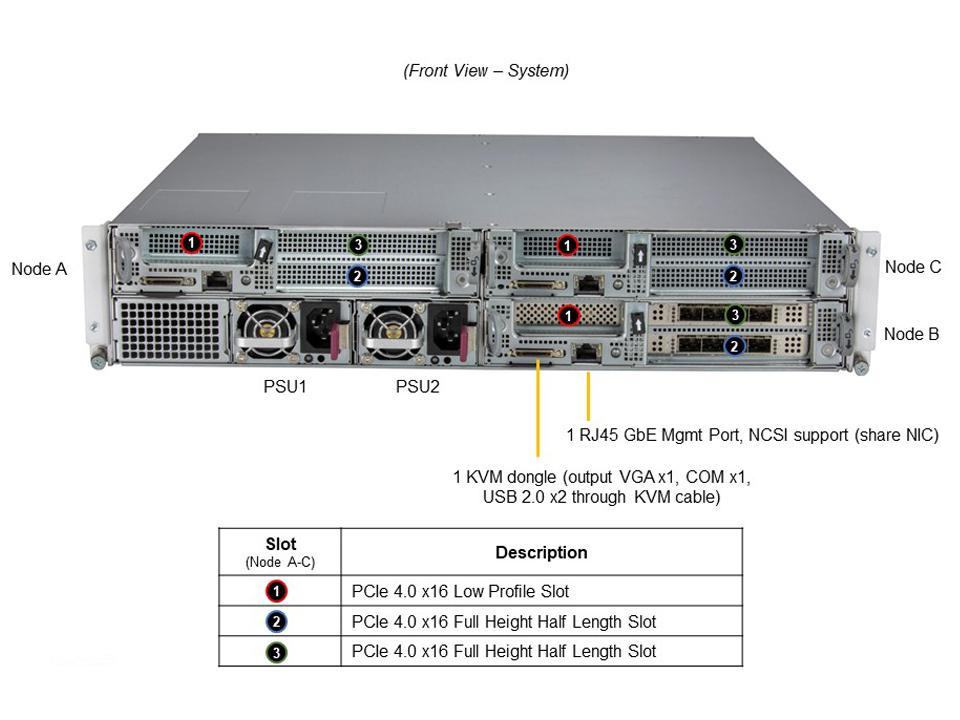

A three-node cluster using Supermicro’s IoT SuperServer SYS-210SE-31A, a 2U Ice Lake-based server with 128GB of RAM.

We have a bunch of different reference applications here at Volt, with an emphasis on our longstanding telco practice, which includes charging, our new streaming offering, ActiveSD, and our Java JSR107 Cache. In this case, we used ‘Voter’ because it combines ACID transactions and aggregate data analysis as well as being easy to understand. If you want us to run one of our other benchmarks on this hardware, please contact us and we’ll see if we can get it done.

Single-Node Benchmark Results

Hardware and Software

We used a single Supermicro E302-12D-8C, with an 8-core Intel(R) Xeon(R) D-1736NT processor, 128GB of RAM, and a single M.2 InnoDisk 3TE7 1TB SATA drive.

We ran a single node of Volt 11.4.5, with SitesPerHost set to 24.

Workload

We were running the Voter benchmark.

Results

With command logging enabled, we saw 348,000 TPS with the CPU at 60% and an average latency of 5ms, which equates to 43,500 transactions per second, per core. This is an extremely strong result when you consider it’s coming out of a small black box roughly the size of two laptops stacked on top of each other.
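The per-core figure above is simple division, and it can be checked in a few lines. The sketch below also estimates how many requests were in flight at once using Little's law (throughput times latency). That second number is not a measurement from the benchmark: it is an estimate that assumes steady state, and the function names are illustrative.

```python
# Figures quoted in the single-node results above.
TOTAL_TPS = 348_000      # throughput with command logging enabled
CORES = 8                # core count of the Xeon D-1736NT
AVG_LATENCY_MS = 5       # average latency reported for this run


def tps_per_core(total_tps: int, cores: int) -> float:
    """Throughput divided evenly across the physical cores."""
    return total_tps / cores


def estimated_in_flight(total_tps: int, avg_latency_ms: float) -> float:
    """Little's law estimate: requests in flight = throughput x latency.

    Assumption: the system is in steady state. This is an estimate,
    not a number reported by the benchmark.
    """
    return total_tps * avg_latency_ms / 1000


if __name__ == "__main__":
    print(f"{tps_per_core(TOTAL_TPS, CORES):,.0f} TPS per core")
    print(f"~{estimated_in_flight(TOTAL_TPS, AVG_LATENCY_MS):,.0f} requests in flight")
```

The in-flight estimate gives a rough sense of how much client concurrency is needed to drive a box like this to the quoted throughput.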

Even though Volt is an in-memory data platform, we still write to disk so that we can recover in the event of a power outage. At maximum load, we would generally see latency rise by about 3ms and throughput drop by about 15,000 TPS while periodic snapshots were being taken. It’s possible this could be mitigated with a higher-spec SSD.

Three-Node Benchmark Results

Hardware and Software

A three-node cluster, with each node being a SYS-210SE-31A 2U server with 128GB of RAM. Two nodes had a single Samsung PM9A3 960GB SSD. The other node had a Samsung PM983 960GB SSD.

Volt settings were variations on:

- KFactor (extra copies of data): 0, 1 or 2

- SitesPerHost: 8, 16, 32 or 48

- Command Logging: True or False

- Snapshots: True or False

Collectively this gives us good coverage of the different ways we see people using Volt Active Data.
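To get a feel for the size of that settings space, here is a minimal sketch that enumerates every combination of the four settings listed above. It assumes a full cross-product. The post does not say that every combination was actually run, and the dictionary keys are illustrative names rather than Volt configuration keys.

```python
from itertools import product

# Values taken from the settings list above.
K_FACTORS = (0, 1, 2)
SITES_PER_HOST = (8, 16, 32, 48)
COMMAND_LOGGING = (True, False)
SNAPSHOTS = (True, False)


def config_matrix() -> list[dict]:
    """Return every combination of the four settings as a dict.

    Assumption: a full cross-product. The key names are hypothetical
    labels for this sketch, not Volt deployment-file attributes.
    """
    return [
        {
            "kfactor": k,
            "sites_per_host": sph,
            "command_logging": logging_on,
            "snapshots": snapshots_on,
        }
        for k, sph, logging_on, snapshots_on in product(
            K_FACTORS, SITES_PER_HOST, COMMAND_LOGGING, SNAPSHOTS
        )
    ]


if __name__ == "__main__":
    configs = config_matrix()
    print(f"{len(configs)} candidate configurations")  # 3 * 4 * 2 * 2 = 48
```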

Workload

We ran the Voter benchmark, whose performance varies depending on how Volt is configured.

Results

We started by testing with high availability and command logging fully enabled, and achieved 1.4 million TPS with an average latency of 11ms. At lower workloads, latency is considerably lower. This is a realistic real-world configuration. If you disable these features because your use case doesn’t need them, Volt will go even faster.

This is because Volt Active Data provides high availability by getting more than one node to do the same work at the same time, with the number of extra nodes being called the ‘kfactor’. This introduces overhead, as somebody has to coordinate and manage sending all requests to two out of three nodes and sanity-checking the results. The upside is that if a node fails in Volt, the outage is 1-3 seconds, as the survivors have all the data and just have to make sure that the other node is, in fact, dead.

Note that if a node fails, the cluster will run at the same speed due to how we implement high availability. If this were a two-node cluster with a ‘kfactor’ of 1 and we lost a node, the surviving node would run faster, as it would no longer have to coordinate with anyone.
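One way to see why a higher kfactor costs throughput is to count how many distinct partitions the cluster can host. The sketch below uses the usual sizing rule that each partition is stored kfactor + 1 times, so the number of unique partitions is (hosts × SitesPerHost) / (kfactor + 1). The SitesPerHost value of 8 is only an illustration picked from the tested list, since the post does not say which value produced which result.

```python
def unique_partitions(hosts: int, sites_per_host: int, kfactor: int) -> int:
    """Number of distinct partitions when each one is kept kfactor + 1 times.

    Raises ValueError if the sites cannot be split evenly into copies,
    or if there are fewer hosts than copies.
    """
    copies = kfactor + 1
    if copies > hosts:
        raise ValueError("kfactor + 1 copies need at least that many hosts")
    total_sites = hosts * sites_per_host
    if total_sites % copies != 0:
        raise ValueError("total sites must be divisible by kfactor + 1")
    return total_sites // copies


if __name__ == "__main__":
    # Illustrative only: three hosts, SitesPerHost of 8.
    for k in (0, 1, 2):
        print(f"kfactor={k}: {unique_partitions(3, 8, k)} unique partitions")
```

With the same hardware, raising the kfactor from 0 to 1 halves the number of partitions doing distinct work, which is consistent with the lower throughput seen when high availability is enabled.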

Disabling high availability but leaving command logging on raised throughput significantly, to 2.1 million TPS with an average latency of 7.5ms, at which point the system became IO bound. This is because the command log (a list of incoming requests) generates sequential IO roughly equivalent in volume to the incoming network traffic, and can rapidly saturate IO subsystems. A better SSD would have improved things. Command logging means that the system will recover to within a second (configurable) of the last change in the event of a power outage.

Maximum throughput was seen with zero spare copies, no command logging, and no snapshots. At this point, the cluster peaked at 4.1 million transactions per second, with an average response time of 15ms.
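The three results above are easier to compare side by side. This sketch takes the quoted throughput and latency figures and derives two things from them: the speedup relative to the fully protected configuration, and the average throughput per node. The per-node figure assumes load is spread evenly across the three nodes, which the post does not state, and the configuration labels are shorthand for this example.

```python
NODES = 3

# (TPS, average latency in ms) as quoted in the three-node results.
RESULTS = {
    "HA + command logging": (1_400_000, 11.0),
    "no HA, command logging": (2_100_000, 7.5),
    "no HA, no command logging, no snapshots": (4_100_000, 15.0),
}

BASELINE_TPS = RESULTS["HA + command logging"][0]


def speedup(tps: int, baseline_tps: int = BASELINE_TPS) -> float:
    """Throughput relative to the fully protected configuration."""
    return tps / baseline_tps


def tps_per_node(tps: int, nodes: int = NODES) -> float:
    """Average throughput per node, assuming an even spread of load."""
    return tps / nodes


if __name__ == "__main__":
    for name, (tps, latency_ms) in RESULTS.items():
        print(
            f"{name}: {tps:,} TPS, {latency_ms}ms, "
            f"{speedup(tps):.2f}x baseline, {tps_per_node(tps):,.0f} TPS/node"
        )
```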

Volt + Supermicro: A Powerful Combo for Edge and IoT

So what does this all mean? It demonstrates that Volt runs well on Supermicro hardware in a number of different configurations. Use cases vary, so it makes little sense for us to try to come up with ‘one number’, as we don’t know your requirements for high availability, recovery time, or tolerable data loss after a power outage.

Having said that, you should consider the following:

Volt has a proven track record in telco charging and IoT, with hundreds of millions of people relying on what we do.

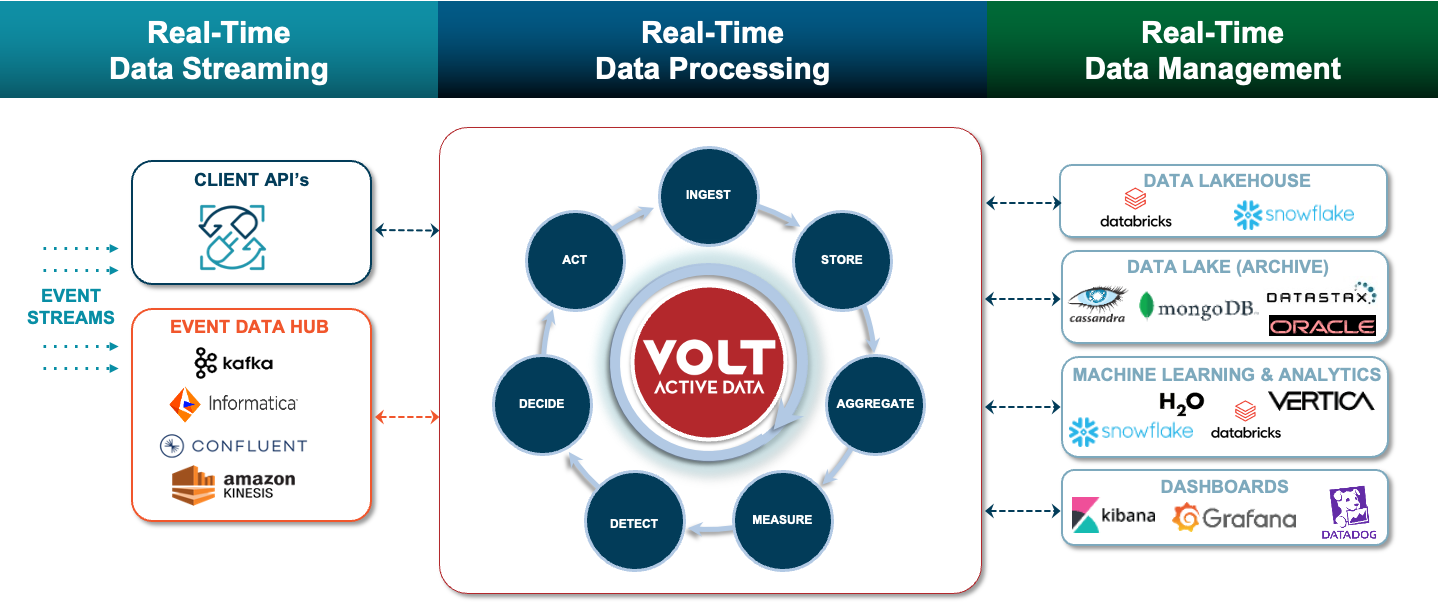

WHERE VOLT SITS IN THE STACK.

Volt’s ‘secret sauce’ is that it combines data processing, storage, and streaming into a single, no-compromises platform that has an architecture that scales by maximizing the work we get out of each CPU core, instead of solving every problem by adding more hardware. In Edge processing, where hardware constraints can be very real, this is incredibly important. Volt has proved to be roughly 9x more efficient than legacy RDBMS and 4x faster than NoSQL technology.

Even though each of the transactions in this benchmark was a fully-fledged ACID transaction, Volt can still do them at scale and with consistent low latency.

Volt also has all the features you’d expect from an enterprise-class data processing and persistence product.

Running Volt Active Data on Supermicro hardware is a potent configuration. Please contact us or Supermicro to discuss your use case.