As we move through 2026, three major shifts are converging to fundamentally change how organizations build and operate mission-critical systems. On the surface, they appear distinct. But they share a common thread: the growing gap between generating insight and making authoritative decisions in real time.

1. Streaming Infrastructure Has Reached Maturity, And That’s When the Problems Started

Streaming platforms are no longer experimental. Kafka, Pulsar, and Flink have become standard components of modern architectures, moving massive volumes of data with impressive speed and reliability.

The maturity is real. Organizations now measure event latency in milliseconds, process millions of events per second, and maintain complex topologies that were unthinkable a few years ago.

But here’s the counterintuitive reality: the better streaming gets, the more obvious it becomes that something fundamental is missing.

Current systems can detect fraud patterns as they form in the stream-processing path, but they cannot prevent the fraud, because they operate in a passive, reactive mode rather than an active, transactional one. The same holds for comparable use cases in other industries: autonomous networks, offer and campaign management, anti-money laundering, and resource usage tracking.

Each layer of the architecture does its job perfectly, and yet the system as a whole fails to meet the business latency SLAs. The problem is the architecture itself.

Streaming platforms excel at moving data and detecting patterns, but they were never designed to do all three things mission-critical decisions need: process the streams, maintain an authoritative operational state, and make decisions transactionally. They are optimized for throughput and flexibility, not for decision immediacy. Even stateful processors such as Flink keep state to serve the pipeline, not to act as a transactional system of record. When teams push decision logic into stream processors or downstream services, state becomes approximate rather than definitive. Decision authority drifts across multiple systems. And under sustained load, behavior becomes unpredictable, not because streaming failed, but because streaming alone cannot provide what mission-critical decisions require: transactional decision making against authoritative state.
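To make "transactional decision making against authoritative state" concrete, here is a minimal sketch that uses Python's built-in `sqlite3` module as a stand-in for an authoritative operational store. It is not Volt Active Data's API; the tables, columns, and the `authorize` function are hypothetical. What it shows is that reading current state, making the decision, changing the state, and recording the decision all commit together or not at all.

```python
import sqlite3


def open_store(path: str = ":memory:") -> sqlite3.Connection:
    # isolation_level=None disables the module's implicit transactions, so the
    # explicit BEGIN/COMMIT/ROLLBACK below are the only transaction boundaries.
    conn = sqlite3.connect(path, isolation_level=None)
    conn.execute(
        "CREATE TABLE IF NOT EXISTS accounts ("
        "id TEXT PRIMARY KEY, balance_cents INTEGER NOT NULL)"
    )
    conn.execute(
        "CREATE TABLE IF NOT EXISTS decisions ("
        "txn_id TEXT PRIMARY KEY, account_id TEXT NOT NULL, "
        "amount_cents INTEGER NOT NULL, outcome TEXT NOT NULL)"
    )
    return conn


def authorize(conn: sqlite3.Connection, txn_id: str, account_id: str,
              amount_cents: int) -> str:
    """Hypothetical: allow or block a debit against the current balance, atomically."""
    # BEGIN IMMEDIATE takes the write lock before reading, so no other
    # connection can change the balance between the check and the debit.
    conn.execute("BEGIN IMMEDIATE")
    try:
        row = conn.execute(
            "SELECT balance_cents FROM accounts WHERE id = ?", (account_id,)
        ).fetchone()
        if row is not None and row[0] >= amount_cents:
            outcome = "ALLOW"
            conn.execute(
                "UPDATE accounts SET balance_cents = balance_cents - ? WHERE id = ?",
                (amount_cents, account_id),
            )
        else:
            outcome = "BLOCK"
        # The decision record commits (or rolls back) together with the debit.
        conn.execute(
            "INSERT INTO decisions VALUES (?, ?, ?, ?)",
            (txn_id, account_id, amount_cents, outcome),
        )
        conn.execute("COMMIT")
        return outcome
    except Exception:
        conn.execute("ROLLBACK")
        raise
```

With an account holding 10000 cents, a first 7000-cent authorization is allowed and a second is blocked, because the second one sees the debited balance rather than an approximate running total. If recording the decision fails (for example, a duplicate `txn_id`), the debit is rolled back as well.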

The paradox: organizations invested in streaming to get real-time control. What they got was real-time visibility into problems after they had happened, without the ability to prevent them. The faster and more mature their streaming becomes, the more painfully obvious the gap between business needs and technical implementation.

What’s changing in 2026: The conversation is shifting from “how many events can we process per second or per minute?” to “how do we make decisions and respond to critical events before they become stale?” Answering the second question requires an architectural shift, one that moves decision-making out of an eventually consistent stream-processing layer and into a data platform that unifies processing, storage, and transactional guarantees. The streaming infrastructure is mature, but the decisioning layer is still reactive. That is what needs to change.

2. Agentic AI Has Moved to Production, And Hit Two Fundamental Problems

Agentic AI is no longer confined to demos and pilots. In 2026, AI agents (LLMs that can reason through problems, query data sources, and recommend actions) are being deployed in fraud detection, risk assessment, network operations, customer support escalations, and, increasingly, autonomous decision-making.

This operational integration has revealed two problems that must be solved together for agents to work in mission-critical environments.

Problem 1: Agents Hallucinate When They Lack Real-Time Operational Intelligence

The hallucination problem at enterprise scale is not about model quality. It is about data access immediacy.

Most architectures give agents access to passive data through SQL queries against batch warehouses. An agent reasoning about a suspicious transaction queries transaction data after the transaction has completed. The reasoning is sophisticated, but it is too late. Hallucinations increase not because the LLM is broken, but because it is making assumptions about the current state that are no longer accurate. An agent recommending to “block this transaction because the account balance is negative” may be working from data that is hours old.

Agents need to query authoritative, real-time operational intelligence during their reasoning process: current balances, live transaction history, active fraud alerts, and real-time system conditions. When they can, through structured APIs rather than blind SQL, hallucinations decrease dramatically and recommendations become contextually accurate.
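One way to read "structured APIs rather than blind SQL" is a narrow, typed tool that returns current state together with the moment that state reflects, and refuses to return context that is too old to be treated as current. The sketch below is hypothetical throughout: `AccountSnapshot`, `get_account_snapshot`, `StaleContextError`, the dict-based `source`, and the 500 ms freshness budget are illustrative assumptions, not part of any product API.

```python
from dataclasses import asdict, dataclass


@dataclass(frozen=True)
class AccountSnapshot:
    account_id: str
    balance_cents: int
    open_fraud_alerts: int
    as_of_ms: int  # the moment in time this view of the account reflects


class StaleContextError(RuntimeError):
    """Raised instead of returning context the agent could mistake for current."""


def get_account_snapshot(source: dict, account_id: str, now_ms: int,
                         max_age_ms: int = 500) -> dict:
    """Hypothetical agent tool: one account, fixed fields, freshness-checked."""
    record = source.get(account_id)
    if record is None:
        # An explicit miss is better than letting the model guess a value.
        raise KeyError(f"unknown account {account_id!r}")
    snap = AccountSnapshot(account_id=account_id, **record)
    age_ms = now_ms - snap.as_of_ms
    if age_ms > max_age_ms:
        raise StaleContextError(
            f"{account_id}: data is {age_ms} ms old (limit {max_age_ms} ms)"
        )
    # Return a plain dict so it can be serialized straight into a tool result.
    return asdict(snap)
```

Called against a live source whose data is 40 ms old, the tool returns the snapshot. Called against a warehouse copy that is three hours old (the negative-balance scenario above), it raises `StaleContextError`, so the agent never reasons from the outdated balance.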

Problem 2: Agents Cannot Own Decision Authority in Mission-Critical Systems

Even with perfect operational intelligence, agents cannot safely own decision authority. Agent inference takes anywhere from hundreds of milliseconds to several seconds, far too slow to sit in the path of every transaction. Probabilistic behavior means the same situation can produce different recommendations. Cost per decision becomes prohibitive at scale. And high agent confidence is not the same as operational correctness.

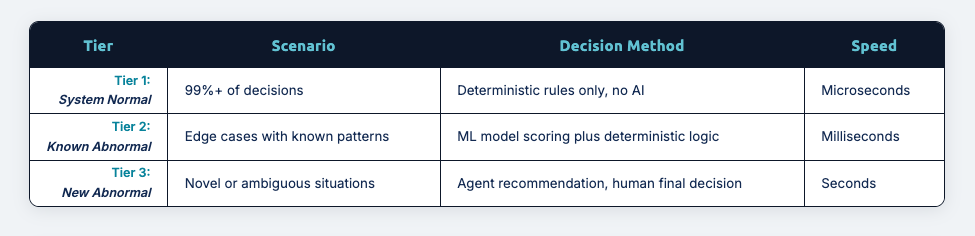

The vast majority of decisions (99%+) are routine and follow known patterns. They should be handled by deterministic rules executing in microseconds, not by agents that reason in seconds. Agents add genuine value in edge cases, novel situations, and ambiguous scenarios, but even there, recommendations need deterministic evaluation before any action is taken.
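The split described above can be sketched as a small router: deterministic rules answer first, the agent is consulted only when no rule is confident, and a deterministic guard evaluates whatever the agent proposes before it can take effect. This is a minimal sketch under assumptions: the `Txn` fields, the rule thresholds, the guard's limit on agent-only approvals, and the `agent` callable (a stub standing in for an LLM call) are all hypothetical.

```python
from dataclasses import dataclass
from typing import Callable, Optional


@dataclass(frozen=True)
class Txn:
    amount_cents: int
    balance_cents: int
    country: str
    home_country: str


def rules(txn: Txn) -> Optional[str]:
    """Deterministic fast path; returns None when no rule is confident."""
    if txn.amount_cents > txn.balance_cents:
        return "BLOCK"          # hard constraint, never delegated to the agent
    if txn.country == txn.home_country and txn.amount_cents <= 50_000:
        return "ALLOW"          # routine, known pattern
    return None                 # unusual case: escalate


def guard(txn: Txn, recommendation: str) -> str:
    """Deterministic evaluation of an agent recommendation before any action."""
    if recommendation not in {"ALLOW", "BLOCK"}:
        return "ESCALATE_TO_HUMAN"   # malformed or hedged answer
    if recommendation == "ALLOW" and txn.amount_cents > 500_000:
        return "ESCALATE_TO_HUMAN"   # agent may not approve large amounts alone
    return recommendation


def decide(txn: Txn, agent: Callable[[Txn], str]) -> tuple[str, str]:
    """Return (outcome, which path produced it)."""
    verdict = rules(txn)
    if verdict is not None:
        return verdict, "rules"
    return guard(txn, agent(txn)), "agent+guard"
```

Because the agent is called only when `rules` returns `None`, inference latency and cost are paid on the residual cases alone, and final authority stays with deterministic code.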

What’s changing in 2026: Organizations need to build for three tiers of situational responses:

In Tier 3, the architecture matters as much as the decision itself. The agent is invoked to help interpret the situation, actively queries operational intelligence through structured APIs during its reasoning, and provides a recommendation based on accurate, real-time context. The human evaluates that analysis and makes the final call. Critically, the complete interaction chain is captured: what the agent queried, what intelligence it received, what it recommended, and what the human decided and why. This explainability chain feeds directly into ML model retraining and agent fine-tuning.
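The interaction chain described above maps naturally onto an append-only record per escalated case. A minimal sketch, assuming a hypothetical `ExplainabilityRecord` schema and JSON Lines as the export format for retraining and fine-tuning pipelines; the field names are illustrative, not a standard.

```python
import json
from dataclasses import asdict, dataclass, field


@dataclass
class ToolCall:
    tool: str         # which operational-intelligence API the agent queried
    arguments: dict   # what it asked for
    result: dict      # what it received back


@dataclass
class ExplainabilityRecord:
    case_id: str
    tool_calls: list[ToolCall] = field(default_factory=list)
    agent_recommendation: str = ""
    agent_rationale: str = ""
    human_decision: str = ""
    human_reason: str = ""

    def human_overrode_agent(self) -> bool:
        # A simple label a retraining pipeline could filter or weight on.
        return bool(self.human_decision) and self.human_decision != self.agent_recommendation

    def to_jsonl(self) -> str:
        # asdict() recurses into the nested ToolCall dataclasses.
        return json.dumps(asdict(self), sort_keys=True)
```

Each escalated case appends one line: the tool calls with their results, the agent's recommendation and rationale, and the human's decision and reason, so a later reviewer or training job can reconstruct exactly what the agent saw.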

Over time, “new abnormal” becomes “known abnormal”. The escalation rate decreases as the system learns.

3. Data Products Are Moving From Insight to Execution

Organizations have invested heavily in analytical data products, including dashboards, predictions, and feature stores, that inform decisions and identify patterns. These are valuable. But there is a growing recognition that they are incomplete.

Analytical data products tell you what happened or what might happen. They cannot tell you what should happen right now, with the authority and speed operational systems require. Data arrives fast, insights are generated quickly, but decisions still happen too late. The fraud has cleared. The limit has been exceeded. The customer has left. This is the last mile problem, and it is becoming impossible to ignore.

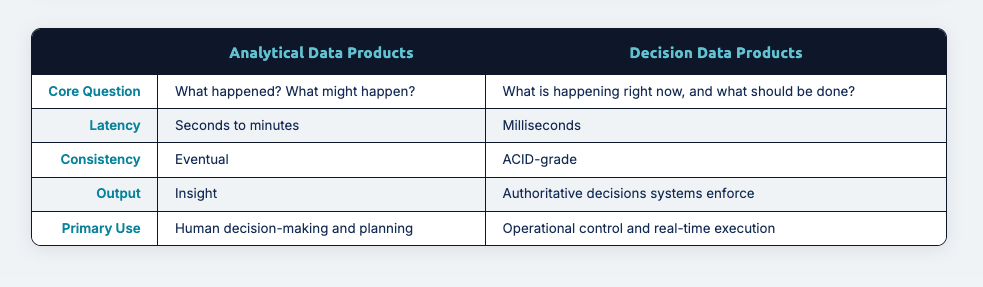

What’s changing in 2026: Organizations are distinguishing between two fundamentally different types of data products:

- Analytical data products answer “what happened?” and “what might happen?” Latency is measured in seconds to minutes. Consistency is eventual. Output is insight.

- Decision data products answer “what is happening right now, and what should be done about it?” Latency is measured in milliseconds. Consistency is ACID-grade. Output is authoritative decisions that systems enforce.

Both are necessary, but they serve different purposes. Analytical data products help people decide. Decision data products let the system itself decide, and serve as the authoritative source of truth for that decision.

Decision data products become especially critical as organizations deploy agentic AI. Agents need to query operational intelligence during reasoning, and that intelligence must be authoritative and current. A fraud detection decision data product with agent support combines the following, packaged as a reusable, trustworthy product for downstream systems:

- Real-time account state exposed via MCP tools

- Agent reasoning grounded in that accurate intelligence

- Deterministic evaluation of the agent’s recommendation

- An authoritative allow/block decision, recorded atomically

- A complete explainability chain
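To illustrate what "account state exposed via MCP tools" can look like, here is a sketch shaped after the tool descriptor fields (`name`, `description`, `inputSchema`) and text tool results (`content`, `isError`) in the Model Context Protocol specification. No MCP SDK or transport is involved; the tool name, the `STATE` dict, and the `call_tool` handler are hypothetical stand-ins for a real server backed by the decisioning platform.

```python
import json

# Tool descriptor in the shape an MCP server advertises from tools/list:
# a name, a description, and a JSON Schema for the arguments.
ACCOUNT_STATE_TOOL = {
    "name": "get_account_state",
    "description": "Current authoritative balance and open fraud alerts for one account.",
    "inputSchema": {
        "type": "object",
        "properties": {"account_id": {"type": "string"}},
        "required": ["account_id"],
        "additionalProperties": False,
    },
}

# Hypothetical stand-in for the decisioning platform's live state.
STATE = {"acct-1": {"balance_cents": 3000, "open_fraud_alerts": 1}}


def _text_result(text: str, is_error: bool) -> dict:
    # Tool results carry a list of content items plus an error flag.
    return {"content": [{"type": "text", "text": text}], "isError": is_error}


def call_tool(name: str, arguments: dict) -> dict:
    """Minimal tools/call handler: one narrow query, no free-form SQL."""
    if name != ACCOUNT_STATE_TOOL["name"]:
        return _text_result(f"unknown tool: {name}", True)
    # Hand-rolled check equivalent to this small schema; a real server would
    # use a JSON Schema validator.
    if set(arguments) != {"account_id"} or not isinstance(arguments["account_id"], str):
        return _text_result("invalid arguments", True)
    state = STATE.get(arguments["account_id"])
    if state is None:
        return _text_result(f"unknown account: {arguments['account_id']}", True)
    return _text_result(json.dumps(state), False)
```

The design choice is the narrowness: the agent can ask for exactly one account's state and nothing else, so any extra argument (such as a smuggled SQL string) is rejected rather than executed.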

The decision data product is not just the final decision. It is the operational intelligence layer agents query, plus the decision authority that makes agent recommendations operational.

The Convergence

What makes these three trends significant is not just their individual impact. It is how they form a natural progression, a continuum from data movement to intelligence to action.

Streaming platforms move data and generate signals. Agentic AI interprets those signals and reasons through complex situations, but only effectively when agents can query authoritative operational intelligence in real time. Decision data products provide both the operational intelligence agents query and the decision authority that makes agent recommendations operational.

Each stage depends on the previous one, and reveals its limitations:

- Fast data without interpretation is just noise

- Agent reasoning without real-time operational intelligence produces hallucinations

- Smart recommendations without deterministic evaluation are just suggestions

- Decision authority without enforcement is just hope

The organizations getting this right are not treating it as a tooling problem. They are treating it as an architectural problem: streaming for signals, agentic AI for reasoning grounded in operational truth, and a decisioning layer for authority and explainability.

These three trends are not separate initiatives. They are three parts of a single architecture, one where AI reasoning is grounded in operational truth and where insight finally becomes action.

If your organization is generating real-time signals but still acting too late, Volt Active Data closes the gap. We turn streaming signals into authoritative, enforced decisions, with full explainability for your AI and your compliance team. Ready to see how it works? Book a demo.